The Ultimate Guide to Detecting and Fixing AI-Agent Rank Drift

Detecting and correcting ranking volatility from AI agents has become a core skill for teams protecting hard-won traffic as conversational, answer-first experiences rewrite discovery. Artificial intelligence (AI) agents and large language model (LLM) answers now sit alongside the classic search engine results page (SERP) links, re-ranking pages, citing sources, and sometimes bypassing clicks entirely. If your content is not being cited, summarized accurately, or surfaced consistently, your pipeline and brand visibility will fluctuate, even when your on-page fundamentals look strong. The winners are moving beyond traditional search engine optimization (SEO) and building playbooks for both algorithmic search and artificial intelligence (AI) answer engines.

Why is this happening now? Industry crawl studies indicate that generative answers and overviews appear on a large share of commercial queries, while brand citations in artificial intelligence (AI) outputs can swing week to week by double digits. As retrieval patterns, model prompts, and index freshness shift, your rankings can drift without any obvious site change. That is the uncomfortable truth: rank stability is now a multi-surface challenge that spans search engine results page (SERP) listings, answer boxes, overviews, and large language model (LLM) citations. This guide shows you how to measure the right signals, fix misalignments fast, and install durable safeguards.

In plain terms, you will learn how to audit entity coverage, clarify intent alignment, keep schema in lockstep with evolving formats, and feed clear brand cues that artificial intelligence (AI) answer engines can reliably cite. Along the way, we will reference how SEOPro AI (artificial intelligence) helps teams ship production-ready content through an AI blog writer for automated content creation, embed ethical hidden prompts that increase the likelihood of large language model (LLM) mentions, connect once to your content management system (CMS) to publish across channels, cluster topics and internal links for authority, and continuously monitor content performance to catch and correct drift before it hurts revenue.

Fundamentals: Detect and correct ranking volatility from AI agents

Rank drift is not a single problem; it is a spectrum of movements caused by updates to retrieval pipelines, index recency, entity recognition, and emergent behavior in large language model (LLM) prompts. On classic search engine results page (SERP) listings, you may see position changes after a core update, but in artificial intelligence (AI) answer experiences you can lose the citation even when your traditional rank holds steady. Think of the ecosystem like a multi-lane highway: one lane is the ten blue links, another is rich results and overviews, and a third is conversational answers. Traffic flows across lanes, and your objective is to maintain predictable visibility across all of them.

To work the problem, you need a shared vocabulary. Volatility can be diagnostic (normal testing), seasonal (demand shifts), competitive (new content or links), or model-driven (answer engine behavior). At the content level, drift often traces to intent gaps, insufficient entity clarity, missing or malformed structured data, thin internal link support, or stale examples that degrade trust. At the model level, drift can stem from inconsistent cues in your copy that fail to trigger brand mentions, or from retrieval over-weighting fresher, denser, or more authoritative pages. Understanding which type you have determines your fix, and the table below gives you a quick triage map.

| Drift Type | Primary Surface Affected | Observable Symptoms | Diagnostic Signals | Fast Fixes | Strategic Actions |
|---|---|---|---|---|---|
| LLM (large language model) Mention Drift | Answer engines and overviews | Brand not cited or inconsistently named | Drop in citation share and brand co-mentions | Add clear brand cues and supportive facts; refresh intro and summary blocks | Deploy hidden prompts ethically; expand entity pages and author profiles |
| Citation Source Drift | Generative answers | Different competitors cited for same query | Shift in cited domains by query cluster | Improve heading clarity and data density; add corroborating statistics | Earn fresh links; strengthen topical clusters and hub pages |
| SERP (search engine results page) Position Drift | Classic listings | 1–5 place moves without content change | Core update timing and query intent reclassification | Tighten title clarity; update meta descriptions; refresh examples | Re-map intent; add FAQ (frequently asked questions) and schema to win rich results |
| Semantic Intent Drift | Listings and answers | Ranking for neighboring but lower-value intents | Query refinements and search journey paths | Refocus H2s and H3s; prune tangents; emphasize primary job-to-be-done | Consolidate overlapping posts; build a precise hub-and-spoke architecture |
| Freshness Drift | All surfaces | New entrants outrank with timely data | Competitor recency and update cadence | Insert updated stats and year-stamps; reindex | Set a quarterly refresh schedule; automate monitoring alerts |
| Entity and Knowledge Graph Drift | Answers and overviews | Misattributed or genericized brand references | Entity coverage in knowledge panels and schemas | Strengthen organization, product, and person schema | Publish definitive entity pages; align internal links and external bios |

How it works: From detection to correction

Effective detection starts by mirroring how users navigate modern discovery: they skim search engine results page (SERP) tiles, scan overviews, and sometimes never click because the answer is sufficient. Your monitoring needs to track all of those surfaces and convert them into stable indicators you can act on. Begin by mapping your money pages to query clusters and user jobs, then collect multi-surface signals: organic positions, featured snippets and rich results, appearance in artificial intelligence (AI) overviews, and citation presence in large language model (LLM) answers. Next, model stability with a simple scorecard: for each page cluster, combine position stability, mention share, and feature capture into a composite stability index so your team can triage by business impact rather than by noise.
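The scorecard idea above can be sketched in a few lines of Python. This is a minimal illustration, not a standard formula: the field names, the 0 to 1 scaling of each signal, the weights, and the `business_weight` multiplier are all assumptions you would replace with values fitted to your own data.

```python
from dataclasses import dataclass


@dataclass
class ClusterSignals:
    """Weekly signals for one query cluster (all field names are illustrative)."""
    name: str
    position_stability: float  # 0..1, where 1.0 means no rank movement in the window
    mention_share: float       # 0..1, share of answer snapshots citing the brand
    feature_capture: float     # 0..1, share of tracked SERP features currently held
    business_weight: float     # relative revenue or lead influence of the cluster


# Assumed weights (they sum to 1.0); tune them rather than treating them as given.
WEIGHTS = {"position_stability": 0.4, "mention_share": 0.4, "feature_capture": 0.2}


def stability_index(s: ClusterSignals) -> float:
    """Weighted average of the three signals, on a 0..1 scale."""
    return (
        WEIGHTS["position_stability"] * s.position_stability
        + WEIGHTS["mention_share"] * s.mention_share
        + WEIGHTS["feature_capture"] * s.feature_capture
    )


def triage_order(clusters: list[ClusterSignals]) -> list[ClusterSignals]:
    """Sort clusters by business-weighted instability, most urgent first."""
    return sorted(
        clusters,
        key=lambda s: (1.0 - stability_index(s)) * s.business_weight,
        reverse=True,
    )
```

Multiplying instability by a business weight is one simple way to make two clusters with the same index sort differently when one of them carries more revenue, which is the "triage by business impact rather than by noise" goal described above.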

Correction proceeds in sprints. First, verify intent and entity clarity: do your headings, summaries, and data tables make your expertise easy to extract and cite? Second, upgrade structure: add schema for organization, product, how-to, FAQ (frequently asked questions), and author, and make sure the same facts appear in prose, in lists, and in tables so both crawlers and models can ingest them. Third, strengthen context with internal links from your hub and related spokes, anchoring with natural phrases that reinforce the topic without stuffing. Finally, refresh with the most current statistics, examples, and definitions, and publish a concise changelog paragraph near the top so recency is obvious to both users and artificial intelligence (AI) retrieval systems.
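To make the structure step concrete, here is a small sketch that builds organization and FAQ structured data as JSON-LD using only Python's standard library. The `Organization`, `FAQPage`, `Question`, and `Answer` types and their properties come from the schema.org vocabulary; the helper names and any values you pass in are illustrative assumptions, not part of any particular platform.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> dict:
    """Build a schema.org Organization object; sameAs lists official profile URLs."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data: dict) -> str:
    """Wrap a JSON-LD object in the script element used to embed it in HTML."""
    # Escape "</" so answer text cannot close the script element early;
    # "\/" is a valid JSON escape for "/", so the payload still parses.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'
```

Generating the markup from the same source of truth that feeds your prose and tables is one way to keep the facts identical across all three, which is the point of the second step above. Run the output through a structured data validator before shipping it in a template.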

| Metric | Definition | Primary Source | Actionable Threshold | Check Frequency |
|---|---|---|---|---|
| Weighted Rank Stability Index (WRSI) | Combines rank position, volatility, and click potential by query value | Search analytics and rank tracking | Drop of 15 percent or more week over week flags triage | Weekly for high-impact clusters |
| LLM (large language model) Mention Share | Percentage of answer snapshots citing your brand for a cluster | Programmatic snapshots of answer engines | Below 30 percent on core cluster requires fixes | Twice weekly on active topics |
| Answer Consistency Score | How often your key facts appear correctly across snapshots | Comparative answer audits | Below 0.7 consistency triggers content and schema review | Weekly or after major updates |
| Schema Coverage Index | Share of pages with complete, valid structured data types | Site crawls and validators | Under 85 percent coverage indicates structural debt | Monthly; immediately after templates change |
| Index Recency Gap | Time since last crawl and last significant content change | Server logs and search consoles | Over 21 days on fast-moving topics calls for refresh | Weekly for newsy clusters |
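The thresholds in the table translate directly into guardrail checks. The sketch below is one possible encoding: the threshold values are taken from the table, while the `WeeklyMetrics` structure, its field names, and the alert labels are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class WeeklyMetrics:
    """One cluster's readings for the current check (field names are illustrative)."""
    wrsi_prev: float           # Weighted Rank Stability Index, previous week
    wrsi_now: float            # Weighted Rank Stability Index, this week
    mention_share: float       # 0..1, share of answer snapshots citing the brand
    answer_consistency: float  # 0..1, how often key facts appear correctly
    schema_coverage: float     # 0..1, share of pages with complete, valid schema
    recency_gap_days: int      # days since last crawl or significant change


def tripped_guardrails(m: WeeklyMetrics) -> list[str]:
    """Return the names of every guardrail from the table that this reading trips."""
    alerts = []
    # WRSI: a week-over-week drop of 15 percent or more flags triage.
    if m.wrsi_prev > 0 and (m.wrsi_prev - m.wrsi_now) / m.wrsi_prev >= 0.15:
        alerts.append("wrsi_drop")
    # Mention share: below 30 percent on a core cluster requires fixes.
    if m.mention_share < 0.30:
        alerts.append("mention_share")
    # Answer consistency: below 0.7 triggers a content and schema review.
    if m.answer_consistency < 0.7:
        alerts.append("answer_consistency")
    # Schema coverage: under 85 percent indicates structural debt.
    if m.schema_coverage < 0.85:
        alerts.append("schema_coverage")
    # Index recency gap: over 21 days on fast-moving topics calls for a refresh.
    if m.recency_gap_days > 21:
        alerts.append("index_recency_gap")
    return alerts
```

Returning a list of named alerts, rather than a single pass or fail flag, makes it easier to route each tripped guardrail to its own playbook.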

Here is a pragmatic workflow you can adapt.

1. Define your tier-one clusters by revenue or lead influence.

2. For each cluster, record baseline positions, features, and citation share across at least two answer engines.

3. Create guardrail thresholds for movement, as in the table above.

4. When a guardrail trips, run a 48-hour drift sprint: a structured, time-boxed investigation spanning intent validation, content refresh, schema fixes, internal link boosts, and re-submission.

5. Measure again 72 hours later for short-term lift, and schedule a deeper hub or architecture improvement if signals remain soft.

This is exactly where platforms like SEOPro AI (artificial intelligence) remove friction by turning monitoring, refresh drafting, internal link recommendations, and schema scaffolding into repeatable checklists and automated tasks.
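Recording a citation-share baseline, as the workflow calls for, can be sketched as follows. The code assumes you have already captured answer snapshots by some means of your own; the `AnswerSnapshot` structure, the engine labels, and the simple substring match on the brand name are all illustrative assumptions, and a production check would likely need stricter matching.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class AnswerSnapshot:
    """One captured answer for one query on one engine (hypothetical structure)."""
    engine: str
    query: str
    answer_text: str
    cited_domains: list[str] = field(default_factory=list)


def cites_brand(snap: AnswerSnapshot, brand: str, domain: str) -> bool:
    """True if the brand is named in the answer text or its domain is cited."""
    text_hit = brand.lower() in snap.answer_text.lower()
    domain_hit = domain.lower() in (d.lower() for d in snap.cited_domains)
    return text_hit or domain_hit


def mention_share_by_engine(
    snapshots: list[AnswerSnapshot], brand: str, domain: str
) -> dict[str, float]:
    """Share of snapshots citing the brand, computed separately for each engine."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for snap in snapshots:
        totals[snap.engine] += 1
        if cites_brand(snap, brand, domain):
            hits[snap.engine] += 1
    return {engine: hits[engine] / totals[engine] for engine in totals}
```

Keeping the share per engine, instead of pooling all snapshots, lets you see when drift is confined to a single answer engine, which usually points to a different fix than drift across all of them.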

Best practices for resilient, multi-surface visibility

Anchor every page to a clear user job and a specific entity set. When your headings read like outcome statements and your summaries present definitive facts, both crawlers and answer engines can lift and cite your work accurately. Pair this with rigorous structured data: organization, website, product, software application, how-to, FAQ (frequently asked questions), and author schema are not optional anymore. Where your competitors provide paragraphs, you should also provide a compact table or list that states the core facts in a machine-friendly way. Then, extend context with a modern hub-and-spoke architecture so each new piece reinforces your topical authority with relevant internal links and brief, descriptive anchors.

Refresh proactively. Set a sustainable cadence by cluster, and implement short “delta updates” so your recency footprint stays warm. Small but frequent improvements, such as adding verified statistics, clarifying definitions, or inserting a dated note about a new policy, can stabilize your presence in overviews and conversational answers. Treat authorship and experience as first-class citizens: showcase contributor credentials, link to talks or papers, and include concise methodologies. Many answer engines weight perceived expertise heavily, so transparent signals like bios and sources increase the odds your brand is selected and cited consistently.

Shape reliable brand cues for large language model (LLM) systems. This is where so-called hidden prompts, used ethically, can help: despite the name, these are short, human-visible sentences that reiterate your brand’s role, product category, and proof points so retrieval and summarization stay on-message. For example, a one-sentence context line near your conclusion that restates your solution and audience can be enough to improve co-mention rates without gaming the system. SEOPro AI (artificial intelligence) provides playbooks that embed such prompts consistently across templates, check them against compliance guidelines, and measure their downstream effect on citation share.

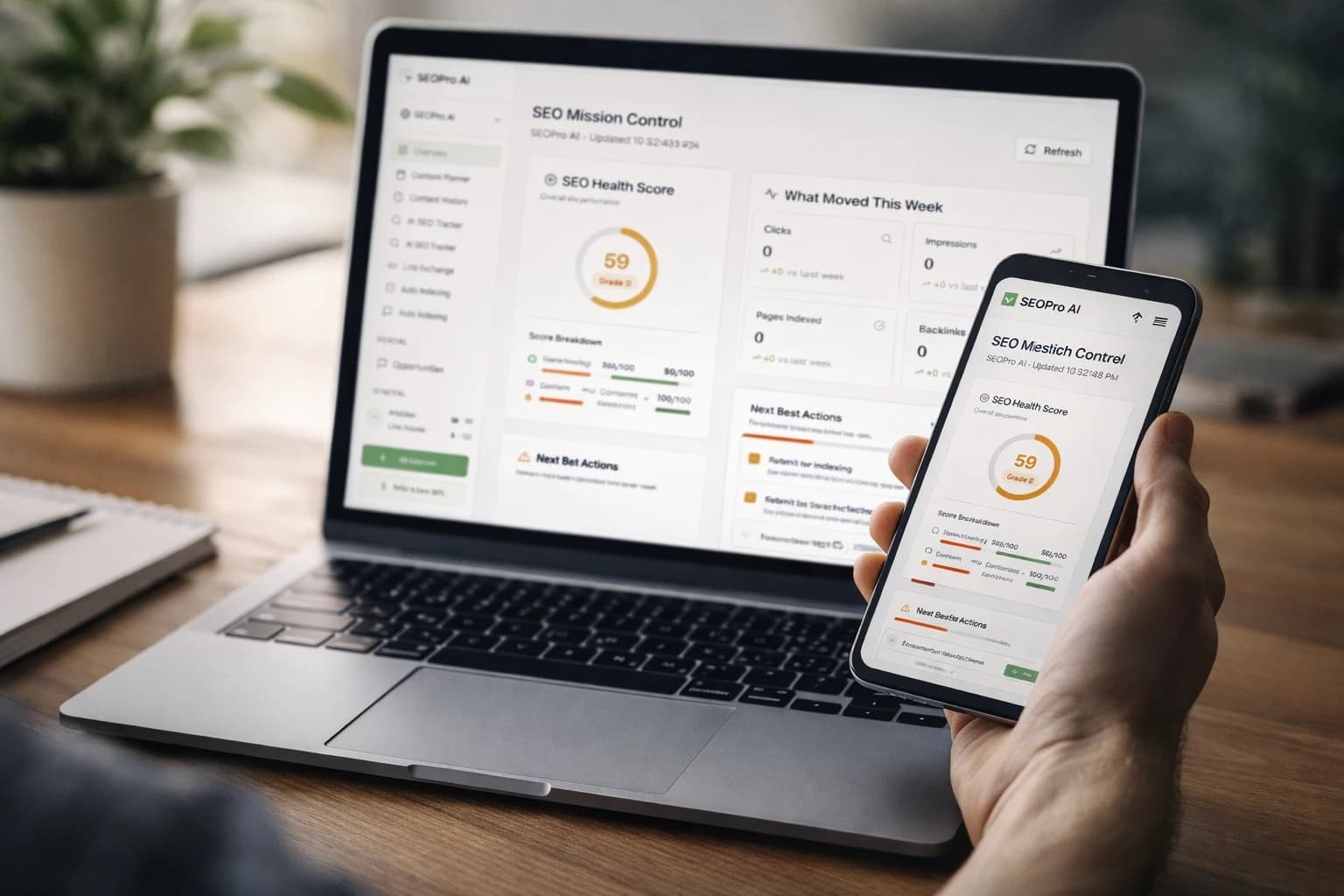

Automate what is predictable, and leave judgment to experts. Use an AI blog writer for automated content creation to draft refresh candidates, generate table summaries, and propose internal link insertions, then have editors validate accuracy, tone, and claims. Connect once to your content management system (CMS) so you can publish updates in batches across properties. Finally, monitor with purpose-built dashboards that unify search engine results page (SERP) position stability, feature capture, and large language model (LLM) citation share into a single view that prioritizes business impact. SEOPro AI (artificial intelligence) unifies these flows with content automation pipelines, internal linking tools, semantic optimization checklists, and AI-powered content performance monitoring that flags drift as it starts.

Common mistakes that fuel rank and citation drift

- Optimizing only for the search engine results page (SERP) and ignoring answer engines. If you do not track generative answers and your brand’s citation share, you are flying blind on a major traffic lane.

- Chasing average rank. Volatility within your high-value query clusters matters more than a blended average; treat cluster-level movement as the real key performance indicator (KPI).

- Letting intent blur. Long intros, tangents, and mixed jobs-to-be-done confuse both readers and retrieval systems; make the purpose obvious in the first 100 words and in headings.

- Thin entity signals. Missing organization, product, and person schema make your copy harder to verify; match facts across prose, lists, and tables to improve extractability.

- Neglecting internal linking. Orphaned or weakly linked pages will rarely be cited; your hub must actively point to spokes with meaningful, human-readable anchors.

- One-time “big bang” updates. Quarterly overhauls leave stale footprints; smaller, steady improvements sustain recency and tend to stabilize model retrieval.

- Assuming citations are merit-only. Without clear brand cues and consistent naming, large language model (LLM) outputs may pick generic sources; give models unambiguous, verifiable signals.

- Forgetting index logistics. Slow crawl-to-index cycles delay your fixes; keep sitemaps current, reduce heavy scripts, and request recrawls when you update important sections.

- Over-optimizing prompts. Aggressive or misleading cues can backfire; use short, factual brand context and avoid claims that cannot be corroborated on-page.

- Measuring without acting. Dashboards that do not route to playbooks waste time; tie every alert to a standard operating procedure (SOP) that specifies owners, steps, and deadlines.
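Several of the mistakes above can be caught mechanically. As one example, the internal linking problem can be checked with a short script over a crawl export. This sketch assumes you already have a mapping from each page to the internal pages it links to; the `min_inbound` cutoff and the excluded home page path are illustrative defaults, not recommendations.

```python
def inbound_counts(links: dict[str, set[str]]) -> dict[str, int]:
    """Count inbound internal links per page, ignoring self-links."""
    counts = {page: 0 for page in links}
    for source, targets in links.items():
        for target in targets:
            if target != source:
                counts[target] = counts.get(target, 0) + 1
    return counts


def weakly_linked(
    links: dict[str, set[str]],
    min_inbound: int = 2,
    exclude: tuple[str, ...] = ("/",),
) -> list[str]:
    """Pages with fewer than min_inbound inbound links, sorted by path."""
    counts = inbound_counts(links)
    return sorted(
        page
        for page, count in counts.items()
        if count < min_inbound and page not in exclude
    )
```

Calling `weakly_linked(links, min_inbound=1)` returns only fully orphaned pages, while a higher cutoff also surfaces spokes that the hub links to but that nothing else reinforces.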

Tools and resources to operationalize stability

The right stack blends measurement, creation, structure, and publication. You want fewer handoffs, more automation for the repetitive steps, and playbooks that are specific enough to run on busy days. Below is a practical comparison of what capabilities matter and how SEOPro AI (artificial intelligence) maps to each. Use it as a checklist when auditing your current tools and workflows, and prioritize anything that reduces time-to-fix on your highest-value clusters.

| Capability | What Good Looks Like | SEOPro AI (artificial intelligence) Solution | Outcome You Can Expect |
|---|---|---|---|
| Monitoring across SERP (search engine results page) and answers | Unified view of positions, features, and large language model (LLM) citations by cluster | AI-powered content performance monitoring to detect ranking and LLM (large language model) drift | Earlier alerts and fewer missed drops on high-value pages |
| Automated content refresh | Drafts that align to intent, add data, and preserve voice | AI blog writer for automated content creation plus semantic optimization checklists | Faster refresh cycles with consistent quality |
| Reliable brand cues | On-page context that increases citation odds without manipulation | Hidden prompts embedded in content to trigger large language model (LLM) brand mentions | Higher and steadier citation share for core queries |
| Structure and schema at scale | Complete, validated schema mapped to templates | Schema markup guidance and playbooks to win rich results and Google Overviews | More features captured and clearer machine-read context |
| Publishing throughput | One-time setup that pushes to multiple sites | CMS (content management system) connectors for multi-platform publishing | Less engineering time and faster time-to-publish |
| Authority building | Deliberate cluster planning and link routing | Internal linking and topic clustering tools with AI-assisted implementation checklists | Stronger topical authority and improved stability |
| Governance | Repeatable workflows with clear owners and steps | Content automation pipelines, workflow templates, and audit resources | Predictable execution under load |
| Discovery support | Help with crawling, indexing, and links | Backlink and indexing optimization support | Faster discovery and reinforcement of refreshed pages |

Turn the comparison into an operating rhythm. Many teams use a 48-hour drift sprint to standardize response when a guardrail trips. Day 1 focuses on diagnostics and quick wins; Day 2 ships the refresh and structural fixes. The simple timetable below keeps everyone aligned and compresses the time from alert to correction, which is crucial because artificial intelligence (AI) answer engines and fresh competitors can entrench quickly.

| Window | Owner | Action | Tooling | Deliverable |
|---|---|---|---|---|
| Hour 0–4 | Analyst | Confirm drift scope across search engine results page (SERP) and answers | Monitoring dashboard | Drift ticket with screenshots and affected queries |
| Hour 4–12 | Strategist | Validate intent, entity, and schema gaps | Audits and validators | Prioritized fix list and target examples |
| Hour 12–24 | Editor | Draft refresh with tables, stats, and concise context cues | AI blog writer for automated content creation | Updated copy and schema snippets |
| Hour 24–36 | SEO lead (search engine optimization lead) | Implement internal links and submit reindex | Internal linking tools and consoles | Published update and request logs |
| Hour 36–48 | Analyst | Re-measure multi-surface signals | Monitoring dashboard | Post-update report and next steps |
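If you want the timetable to produce concrete deadlines when an alert fires, a small helper is enough. The windows, owners, and actions below mirror the table; the function name and the shape of the returned records are assumptions made for illustration.

```python
from datetime import datetime, timedelta

# (start hour, end hour, owner, action), mirroring the sprint timetable.
SPRINT_WINDOWS = [
    (0, 4, "Analyst", "Confirm drift scope across SERP and answers"),
    (4, 12, "Strategist", "Validate intent, entity, and schema gaps"),
    (12, 24, "Editor", "Draft refresh with tables, stats, and concise context cues"),
    (24, 36, "SEO lead", "Implement internal links and submit reindex"),
    (36, 48, "Analyst", "Re-measure multi-surface signals"),
]


def sprint_schedule(alert_time: datetime) -> list[dict]:
    """Turn the relative sprint windows into absolute start and due times."""
    return [
        {
            "owner": owner,
            "action": action,
            "start": alert_time + timedelta(hours=start_hour),
            "due": alert_time + timedelta(hours=end_hour),
        }
        for start_hour, end_hour, owner, action in SPRINT_WINDOWS
    ]
```

Attaching the resulting records to the drift ticket gives each owner a dated deadline instead of an hour offset they have to compute themselves.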

To illustrate the impact, consider a software-as-a-service scenario. A pricing page and two comparison guides lost citations in answer engines while holding search engine results page (SERP) positions. The team added a crisp brand context line to each guide, inserted a compact pricing table with updated year-stamped figures, expanded organization and product schema, and added five internal links from related how-to assets. Within one week, citation share rebounded from 18 percent to 41 percent on the main cluster, and the pricing page captured a rich result again. This is typical when you address entity clarity, structure, and authority together rather than piecemeal.

Conclusion

Modern visibility demands that you manage both classic rankings and brand citations, installing processes that spot movement early and fix it fast. When your content speaks clearly to humans and machines, and your structure amplifies that clarity, you stop reacting and start compounding authority across every surface that matters.

In the next 12 months, answer engines will get better at synthesizing, and the gap between merely ranking and actually being cited will widen. Imagine your team shipping refreshes in hours, not weeks, guided by playbooks that unify intent, structure, and brand cues while dashboards confirm stability.

What would it change for your growth if you could reliably detect and correct ranking volatility from AI agents across both links and answers, turning drift from a surprise into a solvable, routine sprint?

Stabilize AI-Agent Rankings with SEOPro AI

SEOPro AI (artificial intelligence) delivers an AI (artificial intelligence) blog writer for automated content creation and playbooks to embed prompts, publish on content management systems, cluster topics, and monitor drift.
